Data quality can be described in terms of the timeliness, accuracy, consistency, and completeness of information. Different types of data, such as customer data, need different approaches to ensure their quality. First-party data, collected directly from customers, is relatively straightforward: it can be reasonably trusted, but it still needs to be collected according to a clear plan to ensure its validity, consistency, and relevance.

Data timeliness

Data timeliness and data quality are vital to the efficient use of ESSENCE data in surveillance and research. However, data quality issues are often not obvious. For example, ESSENCE reports may show that data from some facilities is delayed or missing, which reduces the usefulness of the data for surveillance and research. In some cases, data can arrive days or even weeks late, depending on which facility is offline or still processing its data.

To improve data timeliness and data quality, ADPH SyS analysts took a multifaceted approach. Using NSSP reports, R programs, and Excel-based tracking, the team improved the quality and timeliness of data from 82 facilities.
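The source does not show the ADPH team's R programs, so the following is only a hypothetical Python sketch of the kind of timeliness check described above. It assumes each record carries a facility ID, the time of the visit, and the time the record arrived in the system; the function names and the 48-hour threshold are illustrative assumptions, not ESSENCE or NSSP settings.

```python
from collections import defaultdict
from datetime import datetime, timedelta
from statistics import median


def facility_lag_hours(records):
    """Median lag in hours between visit and arrival, per facility.

    records: iterable of (facility_id, visit_time, arrival_time) tuples
    (a hypothetical record layout).
    """
    lags = defaultdict(list)
    for facility, visit, arrival in records:
        lags[facility].append((arrival - visit).total_seconds() / 3600)
    return {facility: median(hours) for facility, hours in lags.items()}


def late_facilities(records, threshold_hours=48):
    """Facilities whose median lag exceeds the (illustrative) threshold."""
    lag = facility_lag_hours(records)
    return sorted(f for f, hours in lag.items() if hours > threshold_hours)


def silent_facilities(expected_ids, records, since):
    """Expected facilities that have sent no data at or after `since`."""
    seen = {facility for facility, _, arrival in records if arrival >= since}
    return sorted(set(expected_ids) - seen)


t0 = datetime(2024, 1, 1, 8, 0)
records = [
    ("A", t0, t0 + timedelta(hours=2)),
    ("A", t0, t0 + timedelta(hours=4)),
    ("B", t0, t0 + timedelta(days=5)),
]
print(late_facilities(records))                                # ['B']
print(silent_facilities(["A", "B", "C"], records, since=t0))   # ['C']
```

Separating "late" facilities (data arrives, but slowly) from "silent" ones (no data at all) mirrors the two problems named above: delayed data and missing data.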

Data consistency

When data is collected from several sources, it is critical to check the consistency of the data. One way to do this is with regular expressions, which match text against a pattern and can therefore confirm that values from every source follow the same format. For example, in a dataset about patients undergoing surgery, each patient's record can be compared with the records of other patients to confirm that fields such as dates and identifiers are recorded in the same way.
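As a minimal sketch of the regular-expression approach, assuming (hypothetically) that every source is supposed to store surgery dates as `YYYY-MM-DD`, the check below flags values that do not fully match that pattern. The source names and sample values are invented for illustration.

```python
import re

# Hypothetical shared rule: every source stores dates as YYYY-MM-DD.
ISO_DATE = re.compile(r"\d{4}-\d{2}-\d{2}")


def inconsistent_values(values_by_source, pattern=ISO_DATE):
    """Return (source, value) pairs whose value does not fully match the pattern."""
    bad = []
    for source, values in values_by_source.items():
        for value in values:
            if not pattern.fullmatch(value):
                bad.append((source, value))
    return bad


surgery_dates = {
    "hospital_a": ["2024-03-01", "2024-03-02"],
    "hospital_b": ["03/02/2024", "2024-03-04"],
}
print(inconsistent_values(surgery_dates))  # [('hospital_b', '03/02/2024')]
```

Note that this checks format only: a value such as `2024-13-45` matches the pattern even though it is not a real date, so a format check complements rather than replaces value validation.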

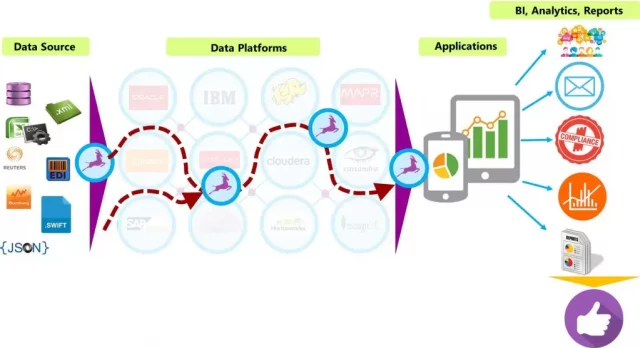

The enterprise landscape is extremely complex, with thousands of disparate systems trying to interoperate. Each of these systems stores vast amounts of data, often structured in its own way, and each has its own business rules and data taxonomies. As a result, the same data may be represented differently in different systems, causing a lack of data consistency.
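One common way to compare records across such systems is to translate each system's local codes into a shared vocabulary before comparing them. The sketch below assumes two hypothetical systems, `crm` and `billing`, that encode a customer's status differently; the code tables and customer IDs are illustrative only.

```python
# Hypothetical per-system code tables mapping local values to a shared taxonomy.
CODE_MAPS = {
    "crm": {"A": "active", "I": "inactive"},
    "billing": {"1": "active", "0": "inactive"},
}


def to_canonical(system, value):
    """Translate a system-specific value into the shared vocabulary.

    Raises KeyError for unmapped values, so gaps in the mapping surface early
    instead of being silently treated as mismatches.
    """
    return CODE_MAPS[system][value]


def disagreements(crm_records, billing_records):
    """Customer IDs present in both systems whose statuses differ after mapping."""
    shared = crm_records.keys() & billing_records.keys()
    return sorted(
        cid for cid in shared
        if to_canonical("crm", crm_records[cid])
        != to_canonical("billing", billing_records[cid])
    )


crm = {"c1": "A", "c2": "I"}
billing = {"c1": "1", "c2": "1", "c3": "0"}
print(disagreements(crm, billing))  # ['c2']
```

Here `c1` agrees once both codes are mapped to `active`, while `c2` is inactive in one system and active in the other, which is exactly the kind of inconsistency described above.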

Data accuracy

Data accuracy and data quality are critical to the successful running of businesses and organizations, and failing to manage data properly can have disastrous consequences. For example, poor data accuracy can result in booking the wrong person for a crime, costly mistakes in patient care, and violations of sanctions rules. The accuracy of a database is one of the most important factors in determining its usability and value.

Data accuracy is a measure of how closely data represents real-world objects and events. For example, if a website changes its URL, any record that still stores the old URL is no longer accurate. It is also important to use spell checkers and data validation rules to reduce the chance of data errors. Additionally, accuracy may be affected by data decay, which occurs when information becomes outdated and irrelevant.
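Data validation rules and a staleness check can be sketched together. The example below assumes a hypothetical customer record with `email`, `age`, and `last_verified` fields; the loose email pattern, the 0 to 120 age range, and the 365-day re-verification window are illustrative assumptions. Such rules catch malformed or outdated values, but they cannot prove a value matches the real world; that still requires comparison with a trusted source.

```python
import re
from datetime import date, timedelta

# Deliberately loose pattern: something@something.something, no spaces.
EMAIL = re.compile(r"[^@\s]+@[^@\s]+\.[^@\s]+")


def validate(record, today, max_age_days=365):
    """Return a list of rule violations for one customer record (hypothetical schema)."""
    problems = []
    if not EMAIL.fullmatch(record.get("email") or ""):
        problems.append("email: invalid format")
    age = record.get("age")
    if age is None or not 0 <= age <= 120:
        problems.append("age: out of range")
    verified = record.get("last_verified")
    # Records not re-verified within the window may have decayed.
    if verified is None or today - verified > timedelta(days=max_age_days):
        problems.append("last_verified: stale (possible data decay)")
    return problems


today = date(2024, 6, 1)
old = {"email": "ann@example.com", "age": 34, "last_verified": date(2022, 1, 10)}
bad = {"email": "not-an-email", "age": 150, "last_verified": date(2024, 5, 1)}
print(validate(old, today))  # ['last_verified: stale (possible data decay)']
print(validate(bad, today))  # ['email: invalid format', 'age: out of range']
```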

Data completeness

Data completeness is a key metric for determining the quality of data. It measures how much of the expected data is actually present. For example, if 18 of 20 cells contain values and two are empty, the data is 90% complete. Checking completeness confirms that the data stored in the target system matches the data you need. This involves validating record counts and actual values, as well as using simple transformations to confirm that no data is missing.
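A minimal sketch of both checks, assuming records are dictionaries and that `None` or an empty string counts as "no data" (an assumption; some datasets use other placeholders). The sample reproduces the 18-of-20 example: five rows times four fields, with two empty cells. The key comparison is a simple stand-in for validating counts between a source and a target system.

```python
def completeness(rows, fields):
    """Share of expected cells (rows x fields) that hold a non-empty value."""
    expected = len(rows) * len(fields)
    filled = sum(
        1 for row in rows for field in fields
        if row.get(field) not in (None, "")
    )
    return filled / expected if expected else 1.0


def missing_keys(source_ids, target_ids):
    """Record IDs present in the source but absent from the target system."""
    return sorted(set(source_ids) - set(target_ids))


fields = ["id", "name", "email", "phone"]
rows = [
    {"id": 1, "name": "Ann", "email": "ann@example.com", "phone": "555-0100"},
    {"id": 2, "name": "Bo", "email": "", "phone": "555-0101"},
    {"id": 3, "name": "Cy", "email": "cy@example.com", "phone": None},
    {"id": 4, "name": "Di", "email": "di@example.com", "phone": "555-0103"},
    {"id": 5, "name": "Ed", "email": "ed@example.com", "phone": "555-0104"},
]
print(completeness(rows, fields))              # 0.9
print(missing_keys([1, 2, 3, 4, 5], [1, 2, 4, 5]))  # [3]
```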

Data quality and completeness are also important factors in data integrity, which requires data to be consistent, accessible, and accurate, as well as safe and secure. The two metrics are closely interrelated.

Data uniqueness

Data uniqueness refers to the extent to which each piece of information is recorded only once in a database, so that the same entity does not appear more than once. For example, a company that tracks its competitors needs each competitor to appear only once in its records. Uniqueness can be measured in two ways: through a static assessment using duplicate analysis, or through ongoing monitoring using identity matching.
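The static, duplicate-analysis approach can be sketched as grouping records by a normalized match key. The normalization here (lowercase, strip punctuation, collapse whitespace) is a crude assumption; ongoing identity matching would typically compare several attributes with fuzzier rules, which this sketch does not attempt. The competitor records are invented for illustration.

```python
from collections import defaultdict


def normalize(name):
    """Crude match key: lowercase, drop punctuation, collapse whitespace."""
    cleaned = "".join(ch for ch in name.lower() if ch.isalnum() or ch.isspace())
    return " ".join(cleaned.split())


def duplicate_groups(records, key_field="name"):
    """Map each normalized key that occurs more than once to the IDs sharing it."""
    groups = defaultdict(list)
    for rec in records:
        groups[normalize(rec[key_field])].append(rec["id"])
    return {key: ids for key, ids in groups.items() if len(ids) > 1}


def uniqueness_ratio(records, key_field="name"):
    """Distinct normalized keys divided by total records (1.0 means no duplicates)."""
    distinct = {normalize(rec[key_field]) for rec in records}
    return len(distinct) / len(records) if records else 1.0


competitors = [
    {"id": 1, "name": "Acme Corp."},
    {"id": 2, "name": "ACME corp"},
    {"id": 3, "name": "Globex"},
]
print(duplicate_groups(competitors))  # {'acme corp': [1, 2]}
```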

Consistency is another important factor in data quality. When data is inconsistent across multiple data stores, you run the risk of storing data incorrectly. This is a common problem when the business definitions for the same data differ between stores. For example, a gender field may be defined with the values Male, Female, and Unknown. If every data store uses identical values, this is not a big issue, but when the values differ, you may need to add values to the shared definition or change its granularity.
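Differences like these can be surfaced by checking each data store's values against the shared definition. The sketch below uses the Male, Female, Unknown value set from the example; the store names and their contents are hypothetical.

```python
# Shared business definition, taken from the example value set.
ALLOWED_GENDER = {"Male", "Female", "Unknown"}


def unexpected_values(values_by_store, allowed=ALLOWED_GENDER):
    """Map each data store to the sorted values it uses outside the shared definition."""
    report = {}
    for store, values in values_by_store.items():
        extra = sorted(set(values) - allowed)
        if extra:
            report[store] = extra
    return report


stores = {
    "crm": ["Male", "Female", "Unknown", "Female"],
    "clinic": ["M", "F", "Female", "Unknown"],
}
print(unexpected_values(stores))  # {'clinic': ['F', 'M']}
```

The report only identifies the mismatch; whether to map `M` and `F` onto the existing values or to extend the shared definition remains a business decision.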

Importance

High data quality can benefit any organization in many ways. It can increase employee productivity and reduce the time spent finding and fixing errors. It can also help organizations avoid costly fines and comply with regulations. High-quality data can help a company increase profitability, keep customers happy, and avoid strategic and operational mistakes.

Data is of high quality when its attributes fit its intended purpose and it accurately represents the real-world constructs it describes. For example, a customer's master data record may contain enough information to bill the customer, but if other customer details are inaccurate or incomplete, the business will still face problems.
